Research projects spanning audio-visual sensing, robotics, AI, and explainability. Interdisciplinary collaborations across the UK, Switzerland, France, Italy, and Korea, combining expertise from computer vision, signal processing, sensors, and AI. Dates indicate my period of involvement.

Multi-Modal Foundational Models and AI Accelerators for Zero-shot Intelligent Surveillance System

Zero-shot intelligent surveillance system leveraging multi-modal foundational models and AI hardware accelerators for scalable, real-time video understanding and scene analysis.

Collaborating Partners (3)

QMUL - Queen Mary University of London, UK

IC - Imperial College, UK

Innodep - Innodep, South Korea

GraphNEx: Graph Neural Networks for Explainable Artificial Intelligence

The project focuses on extrapolating semantic concepts and meaningful relationships from graphs by using concepts and tools from graph signal processing and graph machine learning, while promoting human interpretability. The graph-based framework will adaptively evolve the graphical knowledge base and develop inherently explainable AI. Interdisciplinary project between Queen Mary University of London (UK), EPFL (Switzerland), and ENSL (France).

Project Website

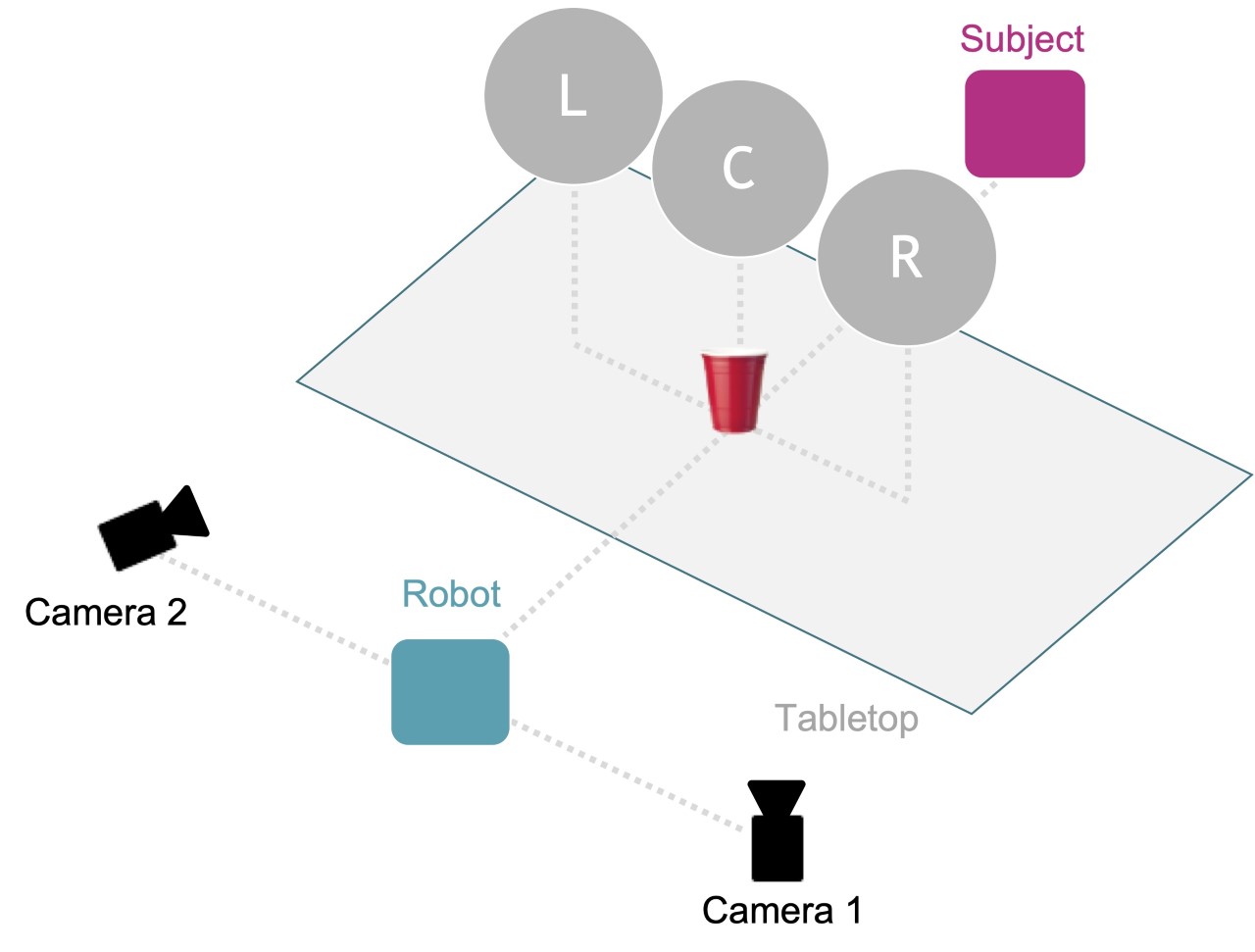

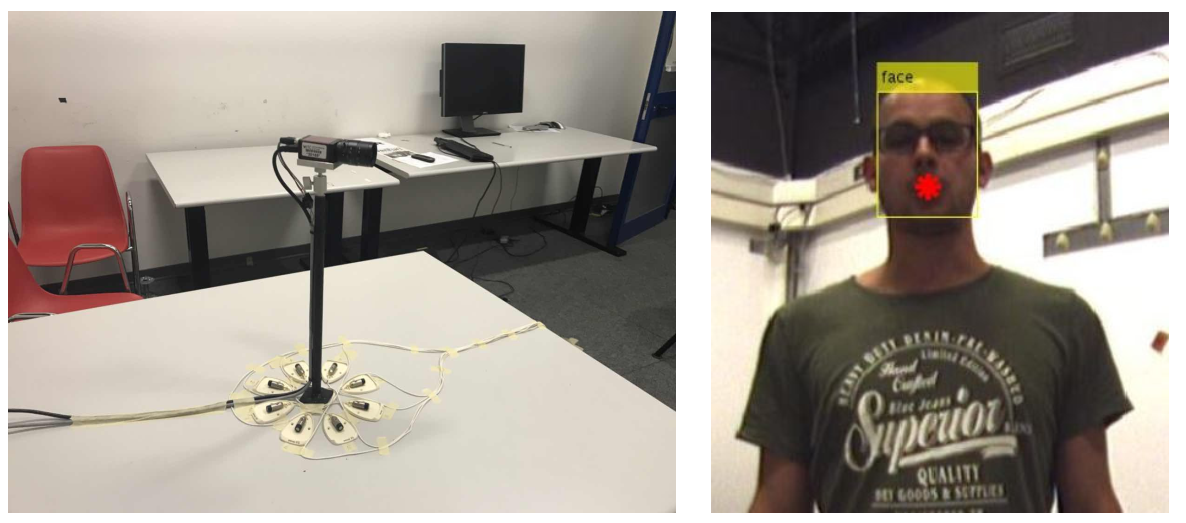

CORSMAL: Collaborative Object Recognition, Shared Manipulation and Learning

Enabling robots to operate in noisy and potentially ambiguous environments in collaboration with humans by exploring the fusion of multiple sensing modalities (sound, vision, touch) to accurately and robustly estimate the physical properties of objects a person intends to hand over to the robot. Benchmarking robotics and perception algorithms for object recognition and manipulation in human-robot handover scenarios. Interdisciplinary project between Queen Mary University of London (UK), EPFL (Switzerland), and Sorbonne University (France).

Project Website

National Centre for Nuclear Robotics (NCNR)

An interdisciplinary project that brings together a diverse consortium of experts in robotics, AI, sensors, radiation, and resilient embedded systems from 8 universities in the UK. The project addresses complex problems in high-gamma environments, where human entry is not possible at all, or in alpha-contaminated environments, where air-fed suited human entry is possible but engenders significant secondary waste (contaminated suits) and reduces worker capability.

Partner Project Website (no longer available)

Audio-Visual Intelligent Sensing

Interdisciplinary project to enable mobile audio-visual monitoring for smart interactive and reactive environments by exploring methods for people tracking, activity recognition, acoustic scene analysis, behaviour analysis, and distant-speech recognition and understanding, applied to individuals as well as groups in multi-camera, multi-microphone environments.